FIELD NOTE

The Problem with AI insights, Part 4: Using Relational Design to Develop the Right User/Tool Balance

Paul Hartley

The AI turn is now reaching a fever pitch. Every day we are bombarded with claims of the AI future being fulfilled by the dazzling capabilities of some new chatbot. But even a casual observer can see these publicly available AI tools, while initially startling in their capacities, are actually a little dull and flat when used in areas requiring more precision, transparency, and flexibility.

They summarize websites and business information. They generate images, bad emails, and creepy videos. And they churn out crap pop tracks that spam Spotify. While these are all undeniably amazing feats of computing, they are the fulfillment of ideas knocking around in the AI space since the 1970s and before. These are the same problems AI researchers have been working on for decades. While cool, they are not that useful to the average user, and they hardly stand as examples of an ability to take on more technical tasks without a serious design and engineering effort to adapt them to purpose. Even in the case we have been exploring in the previous articles, using an AI tool stack in ethnographic analysis, the utility of AI tools is questionable.

The current crop of widely available AI tools is providing nothing more than a proof of concept for what is to come. But to really grasp the value of what AI can do, and unlock this future usefulness, we have to begin to modify our thinking regarding what these tools are for, how they can help humans do things, and what we might do together that we were unable to do as human users only. This will require new approaches to design, ones that can help us see beyond how we did things in the past.

Novel ideas are not easy to come by, and the problem of a distinct lack of them emanates from the very core of AI development: the idea of artificial intelligence itself. We are stuck in this lacklustre, antiquated thinking because of the dominance of a long-held goal in AI: its purpose is to fulfill the promise that a machine can do what a human being can do (possibly even better). For more than one hundred years, the idea of a super-intelligent machine has been grounded in its ability to compete with humans and better them. The AI world has been so focused on the Turing test, on Skynet, and on the Matrix, that it forgot to ask if it is even worthwhile to have a machine do what a human can do, just faster, cleaner, and with more precision.

This idea is the driving force behind the development of AI, and as a result everything we ask it to do is bumping up against what humans are already doing. We’re trying to recreate or better humans, when we should be looking to create complementary AI systems. If we let go of the idea of a super-intelligent system doing human-like things, there is an entire world of possibilities waiting for us. Having only this one goal, no matter how broad it is, is stopping us from doing something more interesting.

We need new goals to help us define what an AI tool can do that is really useful. Rather than trying to have a machine compete with humans and demonstrate how cleverly it has been constructed, it might be better to expect AI tools to become integrated into tasks, and ask how they enter into useful relationships with users. The goal of an AI tool perfectly tuned to its task or user is a good one. It immediately does away with the needless threats to human agency and labour, and it provides the AI tool with a set of clearly defined design parameters. We see this in the most impressive examples of medical diagnostics using AI.

Task-based design approaches are not new either. They have already been part of automation system design since the 1990s. While a task-based approach would be a step in the right direction, it would not take an AI system’s inherent flexibility into consideration. A task-based design approach would prevent us from seeing an AI system as a tool capable of a number of tasks, or even of learning a new one.

A better goal would be to define an AI tool’s workings and structure by first deciding what relationship we would like to have with it. Using user-centric relationships as the starting point for defining the purpose of an AI tool would mean we can unlock AI’s potential as a support infrastructure for people. This too eliminates fears around the loss of agency or interference with human labour. It allows us to dream larger. We can ask what the AI looks like if we want to tackle a task in a dialogic partnership, or to use the AI tool to harness the power of a collective user (more than one person) rather than an individual. We will be able to do things with the aid of AI tools that we, as humans, have not been capable of before.

To achieve this relational goal we need a new design language to define the characteristics of the tool and outline how it fits into a symbiotic relationship with a user. We have to peer into the wild and woolly world of things not yet attempted. Doing this will require a form of relational design that allows us to define the role, function, and features of an AI tool in dialogue with the task, and how the tool works with the user(s) to accomplish it.

Relational design establishes how an AI tool helps a user

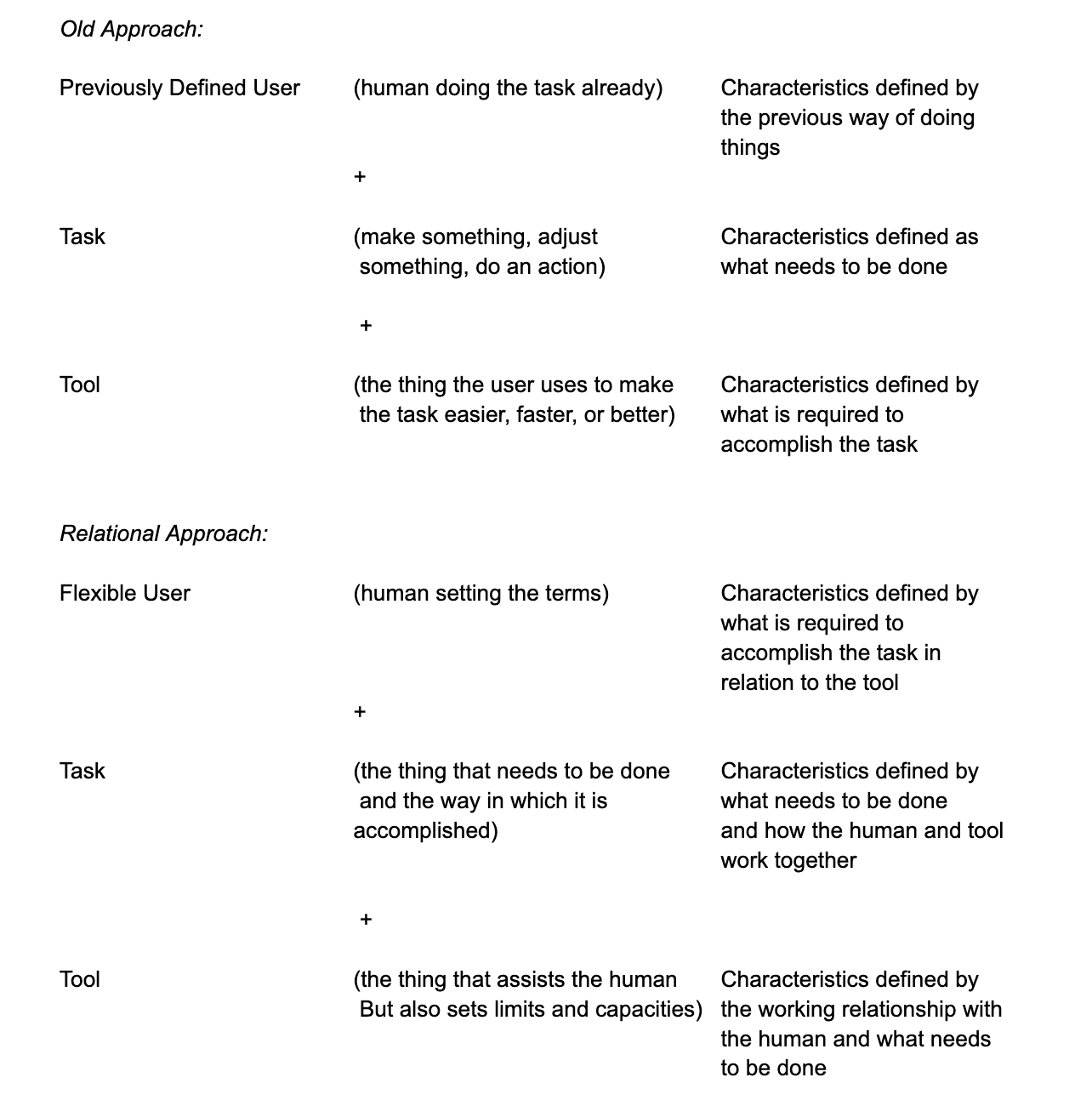

In any work there is a user, a task, and a tool. The three have to be aligned with each other for anything useful to happen. Older design approaches assumed a static concept of a user and task, looking to build a tool for the user to use to accomplish the task. But because AI is more flexible than something like a screwdriver, a hammer, or a calculator, it allows us to be more fluid and creative with the optimization of all three elements. We can design the entire system at the same time, meaning the user and task are also open for scrutiny and development.

Within the context of complex analysis, we need to take a different view of what we are building. Designing an AI system capable of something like enhancing behavioural research and analysis according to the principles we’ve described in the last three articles requires developing the tool (the AI system), the task (the research methodology), and the role of the user (the researcher’s process, skills, and capacities) all at the same time. Accomplishing this requires a design procedure capable of being sensitive to three shifting elements simultaneously. For this, we need an approach to designing tools, tasks, and roles by grounding them in relationships.

We describe this process as relational design. It is something new because, until now, these dynamics were less open to alteration. But it will be a major part of our AI future, because if we cannot become good at defining appropriate working relationships with these tools, we will never unlock their potential. We have described the details of relational design elsewhere [link]. Relational design is not entirely simple because it asks us to be creative about features of these three elements that we never had to consider before.

For our purposes here, it is sufficient to describe it in this way. Relational design is a process of optimizing the working relationships between a user, task, and tool—the three components in doing (well) anything beyond what a human body can accomplish alone. This design approach is relational because it prioritizes and scrutinizes the relationship between these three elements to define the role and workings of each. The relationships between them help define what they should be. So the design process begins with how the network works together and ends with designed roles, features, structure, and working approach.

In the old way of designing a tool to help the human accomplish a task, the human and the task were understood, their characteristics and expectations defined by how it was done in the past. The human had the knowledge, the task was defined by the human, and the tool was built to fit into that understanding. This is, and will always be, a perfectly functional way to design a tool. And many tools, even computers, were, and will be, designed according to this logic.

Given that AI is a flexible tool, employing it properly in the task of helping a researcher maximize the potential of their insights about other people means we have to provide it with some features, a role in the process, and limits on its workings. To do this, we have to clearly define what it will do and also how it will augment the human being’s abilities, or even replace some of them. In the past, tools have been limited by their form and function. Humans would build them for a specific task, and they were only just capable of that task. The tools were limited and could cease to be useful if these limits were exceeded (think breakages, ruined work, damaged users). Also, tools would not evolve by themselves without human interference. But because AI is so flexible, and its limitations are difficult to see, we need to define it through other means. Its limitations alone are not sufficient to define it.

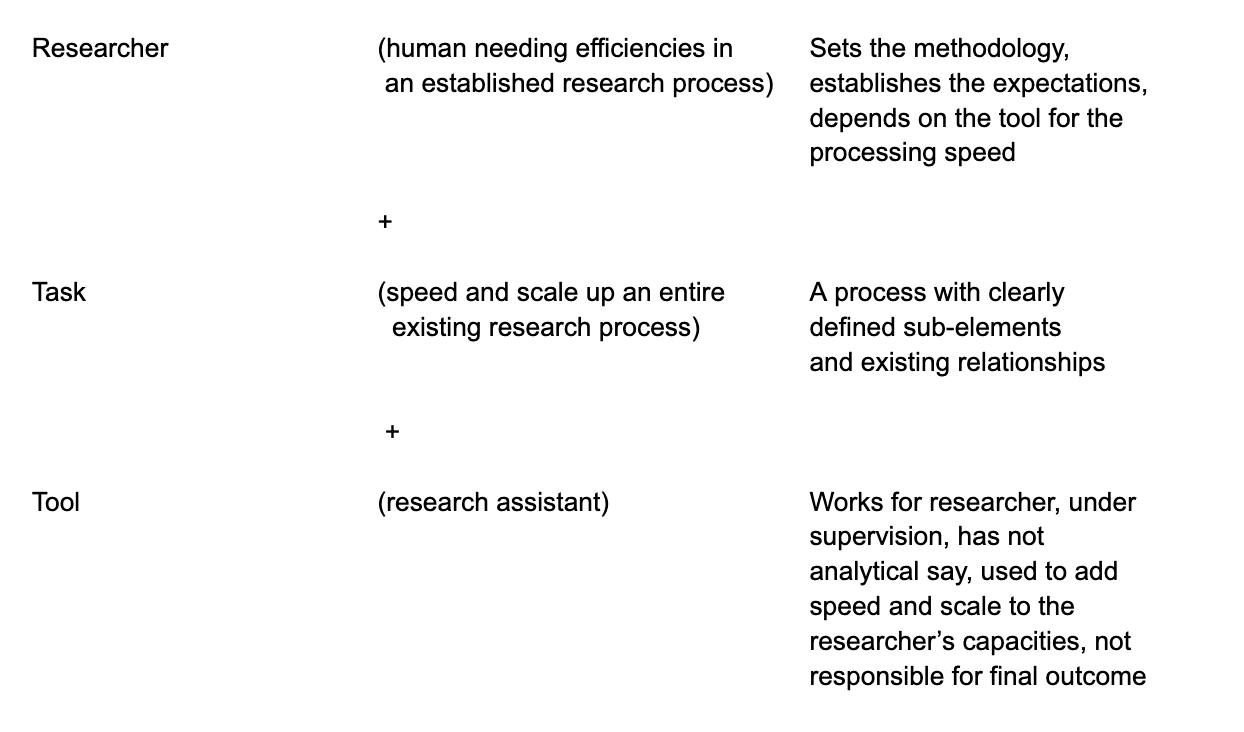

A relational design perspective allows us to create this definition by fixing AI as a suitable tool in a user-task-tool model. The user is the researcher. AI is the tool. The methodological and procedural constraints of insights development define the task. The relationships that exist between the user and the task, the task and the tool, and the user and the tool provide the ground for settling what needs to be done, how it needs to be done, and who does what. Within relational design, establishing the exact nature of these relationships allows us to define what these three elements are, and what they do. Following the examples in the other articles, we’ll consider this with the role of a researcher working with an AI tool.

We are going to assume that we know who the researcher will be. We can also assume we know what the method and process of the tasks are (we discussed these in the previous articles). Thus we can use relational design to settle what the AI should be as an appropriate tool and how it should work with the user (the researcher). The benefit of doing it this way is that we can optimize the way of working around the user’s needs and the task at hand, while also providing the limits and definitional characteristics required for the best use of an AI system.

By setting the user/researcher and tool relationship as that of supervisor and research assistant, we immediately have a set of characteristics for the tool. It works for the user, meaning it has a supervisor. All of its tasks are prescribed by the unequal structure and requirement of oversight. It has to be transparent in its work, follow instructions, and deliver only what is required. But it does have the ability to be creative within its remit. It is not responsible for the final outcome and is not the analyst, meaning it must be predictable, careful, thorough, and open in its work.

With this in mind, we can turn to the relationship between the task and the tool. The research method is already defined by the researcher, and the expectations of the researcher establish the scale and requirements of the task. Once this is set, the tool/research assistant is used to expand the scale and scope of the researcher, meaning its primary responsibility is to expand the researcher’s ability to manage the task. The tool works on the task within very strict guidelines, and only works on one element at a time before reporting its work. This means it is not responsible for the entire task, just the part it has been given. It is also not responsible for analysis or the final outcome, meaning it shares the overall task with the researcher.

This is the core of relational design: we use the relationships between the elements to provide the definitional characteristics of each of the elements. The tool is entirely defined by the other elements and the role it is expected to play. If we had just assumed it would be an AI tool, then the feature set would be a lot harder to describe. Once the relationships and roles are defined, the entire complex is easier to describe and the feature set of the tool is simply the logical conclusion of what its role is, what it is expected to do, how it is to work with the user, and what it is asked to tackle. Relationships allow us to give the tool a social role. Much of what it must be and how it is to work is encoded in the simple descriptions of this role and the relationships that give it meaning.

Moving from ‘Centaur’ to a more useful basic relationship

An AI system can seem to operate as a teacher, mentor, friend, sycophant, lover, employee, inner monologue, and practically anything else. None of these roles are appropriate in the development of insights about a group of humans (the research subjects in an ethnographic study). It can neither understand nor experience them in their full detail. So, it cannot operate as a full-blown actor in this network of people. It has to be contained entirely under the control of the researcher.

This places the AI tool firmly in the category of an extension of the agency and action of the user (researcher), making it more employee, analyst, or potentially inner monologue. It is something the user uses to get the task done (be it hammering in a nail, analyzing human behaviour, or printing microchips), not something that helps define that task or works independently.

In the AI context, one way of referring to this relationship was as a ‘centaur,’ a hybrid of human and machine working together to accomplish a task. While this term has a long history, one of the most enthusiastic proponents of the idea was Garry Kasparov, the chess grandmaster, who saw the potential of this relationship after his famous games against IBM’s Deep Blue computer in 1996 and 1997. The idea of a form of chess grounded in a human and a machine working together was formed from his realization that Deep Blue was not conceptualizing the game as he would, and therefore was making radically different choices. This fact is now common knowledge to chess players because this centaur approach is now baked into chess game engines and the idea of ideal machine moves. But since the task of playing chess is quite limited, the centaur is an easy relationship to build. The computer and the user are indeed trying to do the same thing, and so when they do it together, they are a true hybrid.

But this half-human, half-machine hybridity is not so clear when the task is more complex. The ‘centaur’ metaphor does not work because it oversimplifies the relationship between the user and the tool. So we must look to be more precise.

The terms of the relationship between the user and the tool working on the task have to include a hierarchy. The human’s analytical position must be prioritized. This means the human dictates the work, the goals, and the outcome. They also supervise the tool as it completes its work. So, the characteristics of the relationship between the user and the tool are grounded in command and control and feature supervisory and evaluative elements. The tool is constrained by the relationship, and cannot exceed these limits without permission and discussion. Since it is not the tool that signs the work, this relationship is a hierarchical partnership. The AI tool is more research assistant than anything else.

This then means the task has to be reconceptualized to include control and evaluation touch points. It also requires the tool to work in an entirely transparent manner, showing its work so it can be entirely scrutinized and revisited for adjustment.
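One way to picture this supervised, transparent way of working is the small Python sketch below. It is only an illustration under stated assumptions, not a description of any real system: the names `WorkReport`, `assistant_summarize`, and `run_supervised` are hypothetical, and the "assistant" is a stand-in that merely tidies and truncates text rather than calling an AI model. What it tries to show is the shape of the relationship: the tool handles one element at a time, reports its working, and cannot continue past a touch point without the researcher's approval.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class WorkReport:
    """What the assistant hands back after working on one element (hypothetical)."""
    element: str          # the single piece of the task it was given
    output: str           # what it produced
    steps: List[str]      # its visible working, open to scrutiny
    approved: bool = False


def assistant_summarize(element: str) -> WorkReport:
    """Stand-in for an AI call: normalizes whitespace and keeps 40 characters."""
    cleaned = " ".join(element.split())
    steps = [
        f"received {len(element)} characters",
        "normalized whitespace",
        "kept at most the first 40 characters",
    ]
    return WorkReport(element=element, output=cleaned[:40], steps=steps)


def run_supervised(
    elements: List[str],
    assistant: Callable[[str], WorkReport],
    review: Callable[[WorkReport], bool],
) -> List[WorkReport]:
    """Work through the elements one by one, pausing at each touch point."""
    reports: List[WorkReport] = []
    for element in elements:
        report = assistant(element)        # the tool works on one element only
        report.approved = review(report)   # the researcher evaluates the report
        reports.append(report)
        if not report.approved:
            break                          # the tool may not proceed on its own
    return reports
```

In this sketch the `review` function is where the researcher's judgment sits; a rejected report halts the run, so deciding what happens next stays with the human rather than the tool.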
